What Are Leading Questions and How to Use Them In Assessments?

In assessment design, wording matters more than most people realize. A single phrase can subtly guide respondents toward a particular answer, shaping results without anyone noticing. That’s where leading questions come in.

If you’re building a student evaluation, a certification exam, or a workplace skills assessment, the way you frame questions directly impacts the quality of your data. Poorly constructed questions can introduce bias, skew results, and undermine trust in your findings.

In this post, we’ll explore what leading questions are, review practical examples, explain the difference between leading and loaded questions, and share clear strategies to help you avoid bias in your assessments. By the end, you’ll know how to write stronger, more reliable assessment questions that deliver accurate insights.

What Are Leading Questions?

Leading questions are questions that suggest, nudge, or guide respondents toward a particular answer. They often imply that one response is more correct, desirable, or expected than others.

Instead of remaining neutral, leading questions influence:

- How respondents interpret the question

- What they believe the “right” answer should be

- How comfortable they feel choosing certain responses

Importantly, leading questions are often unintentional. Most assessment designers don’t set out to manipulate results. Bias usually slips in because of assumptions, enthusiasm for a program, or unclear objectives.

For example: “How helpful was our excellent onboarding training?”

The word “excellent” subtly pressures respondents to respond positively. Even if the training was ineffective, the wording signals what the designer expects to hear. Just by including one extra word, your data can become compromised.

Why Leading Questions Are a Problem in Assessments

Leading questions reduce the accuracy and reliability of assessment results. When respondents are guided toward specific answers, the data no longer reflects their true experiences or knowledge.

Here’s what can go wrong:

- Biased outcomes: Results skew in the direction suggested by the question.

- False confidence: Decision-makers trust data that isn’t fully accurate.

- Reduced validity: The assessment fails to measure what it’s intended to measure.

- Compromised fairness: Some respondents feel pressured or misunderstood.

In high-stakes environments like academic testing or workforce evaluations, this isn’t a minor issue. It can affect performance reviews, funding decisions, or professional advancement.

Organizations that rely on structured assessments, such as those built through platforms like Agolix, should ensure their question design supports fairness and clarity. Without neutral wording, even the best assessment platform can’t guarantee valid results.

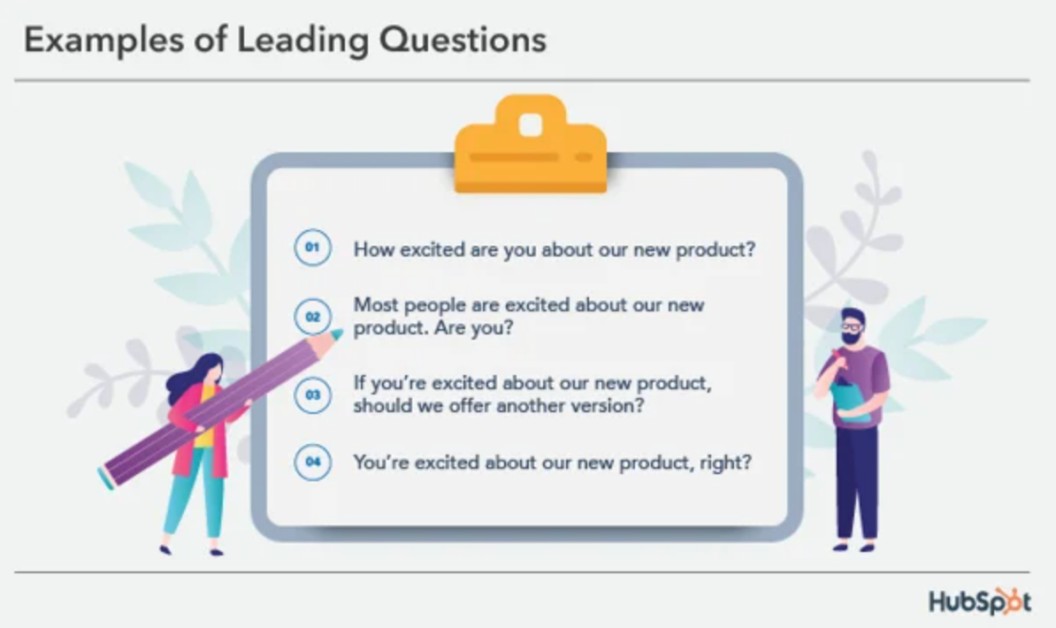

Leading Question Examples

Understanding leading question examples becomes much easier when you see how naturally they can slip into everyday assessment language. Most of the time, the person writing the question isn’t trying to bias the results. They’re simply reflecting their own expectations or experiences. This article in Forbes outlines some common ways companies ask leading questions, and how to avoid them.

You can also see below how this happens in real-world assessment examples and scenarios:

Example 1: Subtle Positive Bias

Imagine you’ve just launched a new training program. You’ve invested time, budget, and energy into making it successful. When it’s time to evaluate it, you ask: “How beneficial was the new training program?”

At first glance, the question seems harmless. When you look closely, though, it assumes the program was beneficial. It doesn’t leave much room for someone who found it confusing, irrelevant, or even counterproductive.

When respondents see that kind of phrasing, they may unconsciously adjust their answers. They might think, “Well, it must have been beneficial and I just didn’t see it clearly.” That tiny assumption can nudge them toward a more positive response.

A more neutral version would be: “How would you evaluate the new training program?”

This rewrite removes the built-in praise and invites a broader range of responses. Instead of signaling what the “right” answer should be, it opens the door to genuine feedback.

Example 2: Assumed Agreement

Consider this question: “Do you agree that the new assessment system improves productivity?”

This one goes a step further. It doesn’t just suggest something positive; it assumes consensus. The phrase “do you agree” implies that improvement is already established and widely accepted.

In a workplace setting, that wording can create subtle social pressure. Employees might worry that disagreeing makes them look resistant to change or out of step with leadership. Even if productivity hasn’t improved, they may hesitate to challenge the premise.

A more neutral alternative might be: “How, if at all, has the new assessment system affected productivity?”

The difference here is that the revised question doesn’t assume improvement. It allows for positive, negative, or no impact at all. That flexibility is essential in assessments where accuracy matters more than affirmation.

Example 3: Emotionally Framed Language

Emotional wording can be just as influential. Take this question: “How frustrating was the outdated reporting process?”

The word “frustrating” carries emotional weight. Even respondents who felt neutral about the process may pause and reconsider. Emotionally charged language subtly shapes memory and perception. Instead of measuring experience, the question begins shaping it.

A more neutral option would be: “How would you describe your experience with the previous reporting process?”

This phrasing removes the emotional cue and lets respondents define the experience in their own terms.

These leading question examples demonstrate how small wording choices can influence responses in ways that aren’t immediately obvious.

Leading vs. Loaded Questions: What’s the Difference?

It’s easy to confuse leading and loaded questions, because both introduce bias. However, they operate in slightly different ways, and understanding that distinction is critical in assessment design.

Leading questions gently guide respondents toward a preferred answer.

Loaded questions, on the other hand, contain built-in assumptions or judgments that trap respondents in a defensive position.

Looking at clear leading vs loaded question examples helps make this distinction concrete.

Leading Question Example

Let’s start with the question: “How effective was our new compliance program?”

This question assumes effectiveness. It doesn’t explicitly say the program worked, but it strongly implies it did. The respondent may feel uncomfortable answering “It wasn’t effective at all,” because the wording frames effectiveness as a given.

The bias is subtle here. The suggestion is implied, rather than forced.

Loaded Question Example

Now, compare that to: “Why did you ignore the compliance training requirements?”

This question assumes that the respondent ignored the requirements. That’s what makes loaded questions particularly problematic. They don’t just guide the answer; they corner the respondent. Instead of measuring knowledge or behavior, the question creates pressure and defensiveness.

As Cornell University’s Center for Teaching Innovation explains, loaded questions may cause respondents to guess at what you want them to say rather than tell you what they think. They often produce unreliable data because respondents react to the question rather than thoughtfully answer it.

In assessment contexts, especially those tied to performance, certification, or compliance, this distinction matters enormously. Leading questions quietly influence, while loaded questions overtly assume.

5 Common Causes of Leading Questions

Leading questions rarely show up because someone intends to bias the results. More often, they grow out of completely understandable human tendencies. We all bring assumptions, expectations, and preferences into our work, and those perspectives can quietly shape the way we write assessment questions.

1. The Desire to Confirm Existing Beliefs

One of the biggest drivers of leading questions is confirmation bias. When a team strongly believes in a program or outcome, that belief can quietly shape how questions are framed.

For example, imagine a team that has spent months building a new training curriculum. They’ve invested significant time and resources, and naturally, they expect it to perform well. When it’s time to evaluate it, their confidence can sneak into the wording.

Instead of exploring outcomes neutrally, they may write questions that assume success. Without realizing it, they shift from measuring results to confirming expectations.

2. Poorly Defined Assessment Goals

Another frequent issue is poorly defined assessment goals.

When assessment objectives aren’t clearly defined, question writers often fill in the gaps themselves… and that’s where bias creeps in.

If the goal of an assessment isn’t tied to specific, measurable outcomes, questions tend to drift toward interpretation rather than observation. This is when phrases like “How effective…” or “How successful…” start appearing unchecked.

Clear goals act as guardrails. Without them, assumptions take over.

3. Overly Positive or Negative Language

Overly positive or negative language is another culprit, and tone plays a bigger role than most people expect. Writers often bring emotional context into their wording, especially when they feel strongly about the subject.

If they’re proud of a program, they may lean into overly positive language. If they’re critical, the wording may skew negative. In both cases, the result is the same: the question begins to guide the response.

4. Writing Too Close to the Desired Outcome

Sometimes, leading questions emerge because the writer is too focused on proving a specific result.

For instance, if the goal is to demonstrate that a new system improves performance, questions may be framed in a way that subtly reinforces that conclusion. Instead of asking what happened, the questions imply what should have happened.

At that point, the assessment stops being exploratory and starts functioning as validation, which undermines its reliability.

5. Lack of Peer Review or Pilot Testing

Finally, lack of peer review or pilot testing allows bias to slip in unnoticed. Even well-intentioned questions can carry hidden bias, especially when they’re written in isolation.

When one person drafts an assessment without feedback, assumptions often go unnoticed. What feels neutral to the writer may come across as leading to someone else.

That’s why peer review and pilot testing are so important. A fresh perspective can quickly identify:

- Assumptions embedded in wording

- Emotionally charged language

- Questions that feel leading or restrictive

This is where structured design processes make a real difference. Platforms that support careful alignment with learning objectives and collaborative review, like Agolix’s assessment management tools, can help reduce bias before assessments ever reach respondents.

Clear workflows and objective alignment don’t just improve efficiency; they protect the integrity of your data.

How to Identify Leading Questions in Your Assessments

Identifying leading questions requires stepping back from your work and reading it as a neutral observer. It’s not always obvious at first glance. In fact, the more familiar you are with the content, the easier it is to overlook subtle bias.

1. Look for Assumptions

Scan your questions for wording that implies a “correct” or expected answer. Openers such as “How effective…,” “How beneficial…,” or “How successful…” assume a positive outcome before the respondent has had a chance to evaluate the subject of the question.

When reviewing your questions, pause and ask yourself: “Am I assuming a result?” If the question already contains the answer, it’s likely leading.

2. Watch for Emotional or Evaluative Language

Another important checkpoint is emotional or evaluative language. Words such as “excellent,” “frustrating,” “outstanding,” or “poor” carry judgment.

Even if the intent is descriptive, these adjectives shape perception before the respondent has formed their own response.
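If you keep your questions in a spreadsheet or text file, the first two checks can be roughed out as a quick automated scan. The following is only a minimal sketch: the function name `flag_leading_wording` is hypothetical, and the word lists contain just the phrases quoted in this post, so they are illustrative rather than exhaustive.

```python
import re

# Phrases quoted in this post; both lists are illustrative, not exhaustive.
ASSUMPTION_OPENERS = ("how effective", "how beneficial", "how successful")
EVALUATIVE_WORDS = ("excellent", "frustrating", "outstanding", "poor")


def flag_leading_wording(question):
    """Return a list of reasons the question may be leading (empty if none found)."""
    text = question.lower()
    flags = []
    for opener in ASSUMPTION_OPENERS:
        if opener in text:
            flags.append(f"assumes an outcome: '{opener}'")
    for word in EVALUATIVE_WORDS:
        # Match whole words only, so "poor" does not match "poorly".
        if re.search(rf"\b{re.escape(word)}\b", text):
            flags.append(f"evaluative word: '{word}'")
    return flags


for q in [
    "How helpful was our excellent onboarding training?",
    "How beneficial was the new training program?",
    "How would you evaluate the new training program?",
]:
    print(q, "->", flag_leading_wording(q) or "no flags")
```

A keyword scan like this only catches wording you already know to look for. Treat it as a first pass before human review, not a replacement for it.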

3. Test With Neutral Rewrites

Another helpful technique is to test your questions with neutral rewrites. For example:

Original: “How helpful was the mentoring program?”

Neutral rewrite: “How would you rate the mentoring program?”

When you compare the two versions, notice how the revised question feels more open-ended. It doesn’t assume helpfulness; it simply asks for evaluation. If the rewritten version broadens the range of possible responses, the original likely contained subtle bias.

This practice of rewriting can be surprisingly revealing. It forces you to separate measurement from interpretation, and check whether your language is shaping the answer.

4. Incorporate Peer Review

For teams building assessments collaboratively, peer review is also valuable. Structured workflows encourage multiple perspectives during the design process. When colleagues review questions together, they’re more likely to catch assumptions, emotional cues, or phrasing that nudges respondents in unintended directions.

Ultimately, identifying leading questions isn’t about perfection; it’s about awareness. The more intentionally you review your wording, the more reliable and trustworthy your assessment results will be.

How to Avoid Leading Questions in Assessments

Avoiding leading questions isn’t complicated, but it does require discipline. Preventing bias comes down to taking your time, reviewing carefully, and choosing words with purpose.

Use Neutral, Balanced Language

The first step is committing to neutral, balanced language. When writing assessment questions, it’s tempting to evaluate as you measure. However, those are two different actions. Strong assessment design focuses on observable behaviors rather than judgments.

For example, asking, “How well did the candidate perform?” already frames performance as something to be judged on a positive scale. It subtly suggests that “well” is the expected direction.

A more balanced alternative might be, “Which of the following best describes the candidate’s performance?”

That version shifts the focus from implied praise to structured observation. It invites description rather than affirmation.

Ask About Experience, Not Interpretation

Another helpful shift is asking about experience instead of interpretation. Interpretation invites opinion, and opinion can be easily influenced by suggestions. Experience, on the other hand, is grounded in facts.

Consider the question, “Did the training improve your confidence?” It assumes improvement and asks respondents to reflect on a subjective internal feeling. While confidence may be important, the wording blends outcome with assumption.

A more neutral angle might be, “How many training sessions did you complete?” or “Which topics were covered during your training?”

These types of questions collect concrete data first. Interpretation can follow later, but it shouldn’t be built into the measurement itself.

Separate Measurement From Judgment

It’s important to separate measurement from judgment. When a question implies success or failure, it pressures respondents to align with that framing.

Asking, “How successful was your project?” assumes that success is the standard for evaluation. A more neutral approach would be, “What were the outcomes of your project?”

This phrasing leaves room for a range of results, whether positive, negative, or mixed, without signaling which one is preferred.

Pilot and Review Questions

Finally, no assessment should go live without some form of pilot testing and review. Even experienced assessment designers can overlook subtle bias in their own writing. A small test group can quickly surface unclear wording or hidden assumptions. During a pilot phase, you can:

- Test questions with a small, representative group

- Ask participants whether any wording felt leading or unclear

- Look for patterns in responses that suggest misunderstanding

- Revise questions based on feedback

Often, what feels neutral to the writer reads differently to someone encountering the question for the first time. That outside perspective is invaluable.

In the end, avoiding leading questions is less about memorizing rules and more about adopting a mindset. When clarity, fairness, and accuracy guide your writing process, neutrality becomes a habit rather than an afterthought.

When Leading Questions Might Appear Acceptable and Why to Be Cautious

In marketing or persuasive communication, leading questions can be strategic.

For example: “Wouldn’t you agree this solution simplifies your workflow?”

However, in assessments, persuasion undermines validity. Even when leading questions seem harmless, they can compromise ethical and methodological standards.

In educational and professional testing environments, fairness and neutrality are essential. Biased questions don’t just affect scores; they affect trust.

If your organization uses assessments for certification, evaluation, or compliance tracking, neutral question design isn’t optional; it’s foundational.

Rewriting Leading Questions: 3 Before and After Examples

Sometimes, improving an assessment question doesn’t require a complete rewrite. In many cases, a few carefully chosen word changes can dramatically strengthen the quality of your data. The difference between a biased question and a neutral one often comes down to a single adjective or assumption.

1. Remove Implied Praise

Consider this question: “How excellent was the instructor’s presentation?”

At first glance, it sounds encouraging. But the word “excellent” already tells respondents how the writer views the presentation. That built-in praise can make people less likely to give critical feedback, even if their experience was only average.

A stronger alternative would be: “How would you rate the instructor’s presentation?”

This revision removes the implied compliment and invites a more honest evaluation. It doesn’t suggest what the answer should be; it simply asks for one.

2. Remove Assumptions About Outcomes

Here’s another common scenario: “Do you agree that the new system saves time?”

This question assumes the system saves time and positions agreement as the expected response. The phrase “do you agree” implies consensus, which can create social pressure, especially in organizational settings where leadership supports the new system.

A more neutral alternative would be: “What impact, if any, has the new system had on your workflow?”

Notice how this version allows for multiple possibilities. The impact could be positive, negative, or nonexistent. By removing the assumption, you collect data instead of affirmation.

3. Replace Judgment With Open Measurement

Finally, consider this example: “How confusing was the previous policy?”

The word “confusing” frames the policy negatively before the respondent has evaluated it. Even someone who found it clear may pause and wonder whether they overlooked something.

A more balanced approach might be: “How would you describe your experience with the previous policy?”

This open-ended phrasing removes the emotional cue entirely, leaving respondents free to characterize their experience in their own terms—whether positive, negative, or neutral. It measures perception without steering it.

Across all these examples, the pattern is clear: the revised versions remove assumptions and emotional cues. They shift from implied evaluation to open measurement.

The result? Cleaner, more reliable, and more defensible data.

6 Best Practices for Writing Fair, Unbiased Assessment Questions

Writing fair assessment questions isn’t about following a rigid formula. It’s about developing consistent habits that protect objectivity. Strong assessment design blends structure with thoughtful reflection, making it both a science and an art.

To consistently avoid leading questions, follow these principles:

- Align each question with a single, clear objective: When a question tries to measure multiple ideas at once, bias and ambiguity creep in. Clear alignment keeps wording focused and purposeful.

- Use consistent wording across similar questions: When similar questions use dramatically different phrasing, respondents may interpret them differently. Maintaining consistent wording patterns reduces confusion and supports more reliable comparisons across responses.

- Avoid absolutes and value-laden adjectives: Absolutes such as “always” or “never” rarely reflect real-world experiences and can push respondents toward extreme answers. Likewise, removing value-laden adjectives such as “excellent,” “poor,” “outstanding,” or “ineffective” helps prevent subtle steering.

- Conduct peer review before deployment: No assessment should rely on a single perspective. Peer review before deployment creates an opportunity for others to question assumptions you may not see. Colleagues can often spot emotionally charged language or implied conclusions that slipped past the original writer.

- Pilot test whenever possible: Pilot testing adds another layer of protection. Testing your questions with a small audience allows you to identify confusion, unintended bias, or inconsistent interpretation before full rollout.

- Review through a bias-reduction lens: Finally, reviewing every question through a bias-reduction lens should become standard practice. Ask yourself: Does this question assume an outcome? Is any wording emotionally charged? Am I measuring observation or implying judgment?

When these checks become routine, fairness becomes built into the process rather than added at the end.

The more intentional your process, the stronger your outcomes.

Better Questions Lead to Better Data

At the end of the day, better questions produce better data, and better data leads to smarter decisions.

Thoughtful wording builds credibility, and neutral language strengthens validity. Unbiased assessments ensure that results truly reflect performance, not persuasion.

When your questions are clear and balanced, your data becomes something you can stand behind with confidence.

Agolix is built to support exactly that kind of intentional assessment design — with tools that help you structure questions, reduce bias, and deliver results you can trust. Ready to build assessments that truly reflect what you’re measuring? Get started today with Agolix!